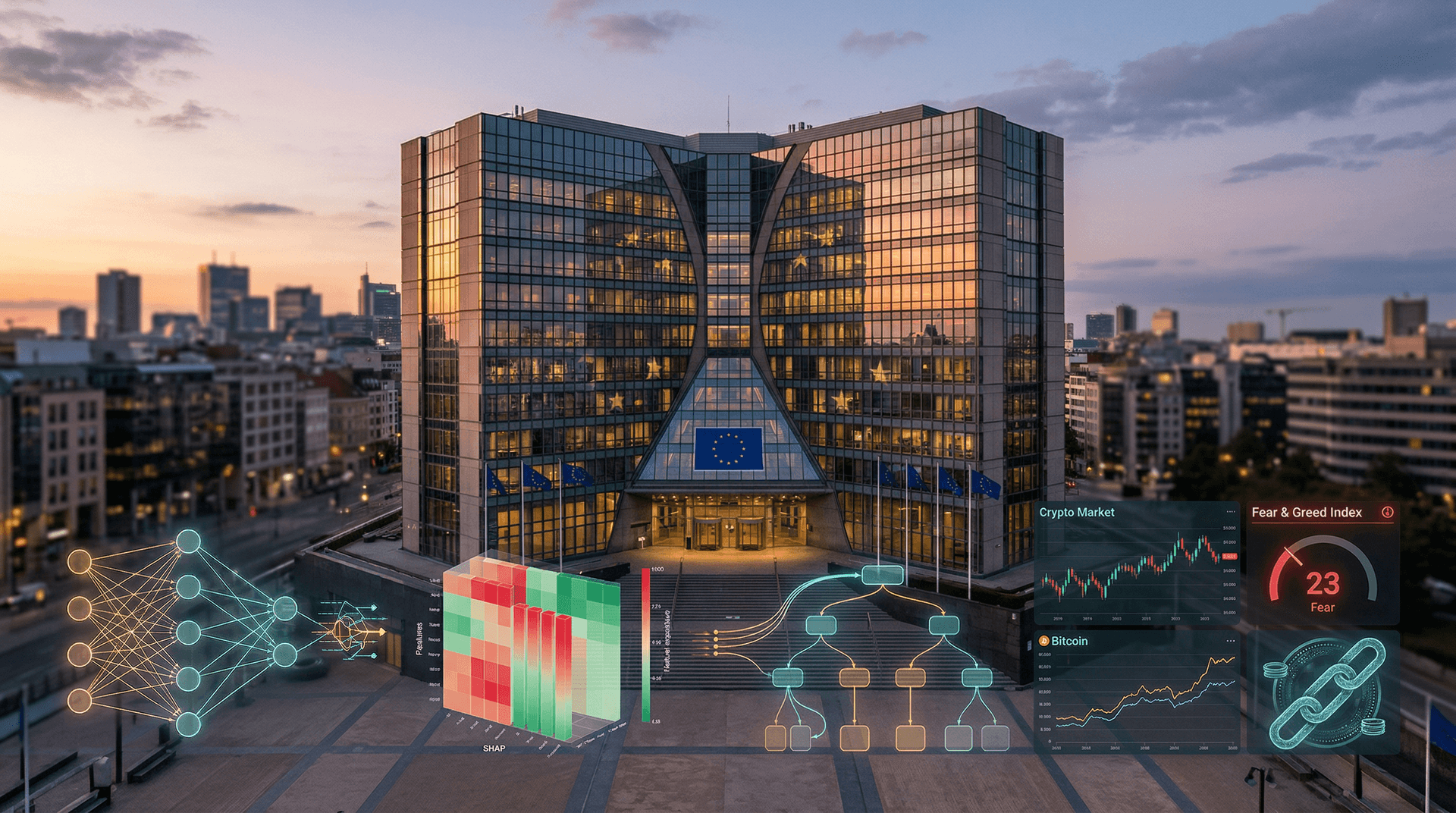

1. Crypto Fear & Greed Index drops to 23 amid opaque AI trading volatility.

2. Bitcoin falls 0.9% to $74,071 USD, underscoring interpretable AI urgency.

3. EU guidelines mandate LIME/SHAP for high-risk finance under AI Act.

The European Commission released interpretable AI guidelines under Regulation (EU) 2024/1689, the EU AI Act, on April 15, 2026. These mandate LIME and SHAP techniques for high-risk financial systems. The Crypto Fear & Greed Index hit 23 the same day, signaling extreme fear.

EU AI Act High-Risk Requirements for Interpretable AI

The EU AI Act classifies AI systems by risk tier. High-risk finance applications must meet logging, documentation, and explainability requirements. Providers must document training data and decision logic.
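As an illustration of the logging requirement, here is a minimal sketch of a per-decision audit record. The schema, field names, and model name are hypothetical and are not taken from the AI Act or any Commission guidance. A content hash is included so that later tampering with a record can be detected.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class DecisionRecord:
    """One logged model decision. All field names are hypothetical."""
    model_version: str
    inputs: dict
    output: str
    explanation: dict  # e.g. per-feature contributions
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self) -> str:
        # sort_keys gives a stable serialization, so the digest is reproducible
        return json.dumps(asdict(self), sort_keys=True)

    def digest(self) -> str:
        # SHA-256 over the serialized record, usable as a tamper-evidence check
        return hashlib.sha256(self.to_json().encode("utf-8")).hexdigest()


# Illustrative values only
record = DecisionRecord(
    model_version="credit-scorer-0.1",
    inputs={"income": 42000, "debt_ratio": 0.35},
    output="deny",
    explanation={"income": -0.2, "debt_ratio": -0.5},
    timestamp="2026-04-15T09:00:00+00:00",
)
log_line = record.to_json()
```

Each `log_line` can be appended to a write-once store, with the digest kept separately for audit.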

The Commission's Directorate-General for Communications Networks, Content and Technology (DG CONNECT) leads implementation. Enforcement begins August 2027. According to the Commission's digital strategy page, these rules aim to cut opacity risks in algorithmic trading.

European Banking Authority (EBA) guidelines on AI in finance align closely with the Act. The EBA stresses transparency to prevent market abuse.

Crypto Volatility Fuels Interpretable AI Demand

Alternative.me's Crypto Fear & Greed Index reached 23 on April 15, 2026. Bitcoin dropped 0.9% to $74,071 USD on major exchanges. Ethereum rose 0.4% to $2,345.67 USD.

XRP gained 0.8% to $1.38 USD. BNB climbed 0.3% to $621.25 USD. Opaque AI trading bots amplified swings.
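The quoted moves can be turned back into prior prices with simple arithmetic: a price P after a change of c percent implies a previous price of P / (1 + c/100). A small sketch using the figures above (the function name is illustrative):

```python
def previous_price(current: float, pct_change: float) -> float:
    """Price before a move of pct_change percent (negative for a drop)."""
    return current / (1 + pct_change / 100)


# (current price in USD, percent change) as quoted in the article
quotes = {
    "BTC": (74071.00, -0.9),
    "ETH": (2345.67, 0.4),
    "XRP": (1.38, 0.8),
    "BNB": (621.25, 0.3),
}

prior = {symbol: previous_price(price, change)
         for symbol, (price, change) in quotes.items()}
```

For Bitcoin this gives a prior price of roughly $74,744.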

Investors demand explanations for model decisions. EU fintechs deploy interpretable models that expose feature importance, for example how much a news sentiment score contributed to a trading signal.

DG CONNECT Endorses LIME and SHAP Tools

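The guidelines specify Local Interpretable Model-agnostic Explanations (LIME) for explaining individual predictions with a simple local surrogate model. SHapley Additive exPlanations (SHAP) attribute a prediction to its input features using Shapley values, and the attributions can be aggregated across a dataset for a global view.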
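The attribution idea behind SHAP can be sketched without any library by computing exact Shapley values for a tiny model. The model, feature names, and baseline below are hypothetical, and features left out of a coalition are simply replaced by baseline values, which is one common convention and an assumption here.

```python
from itertools import combinations
from math import factorial


def toy_model(x: dict) -> float:
    """Hypothetical price-move score with one interaction term."""
    return (
        2.0 * x["sentiment"]
        - 1.5 * x["volatility"]
        + 0.5 * x["sentiment"] * x["volume"]
    )


def shapley_values(model, instance: dict, baseline: dict) -> dict:
    """Exact Shapley values by enumerating every coalition of features."""
    names = list(instance)
    n = len(names)

    def value(coalition) -> float:
        # Features outside the coalition take their baseline value
        mixed = {k: (instance[k] if k in coalition else baseline[k])
                 for k in names}
        return model(mixed)

    phi = {}
    for name in names:
        others = [k for k in names if k != name]
        total = 0.0
        for size in range(n):
            # Shapley weight for coalitions of this size
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                with_feature = value(set(subset) | {name})
                without_feature = value(set(subset))
                total += weight * (with_feature - without_feature)
        phi[name] = total
    return phi


instance = {"sentiment": 0.8, "volatility": 0.6, "volume": 1.2}
baseline = {"sentiment": 0.0, "volatility": 0.0, "volume": 0.0}
phi = shapley_values(toy_model, instance, baseline)
```

The contributions sum to the difference between the model output for the instance and for the baseline. Exact enumeration grows exponentially with the number of features, which is why libraries such as SHAP rely on approximations and model-specific algorithms.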

Counterfactual explanations show the smallest input changes that would alter a model's outcome. Developers are already integrating these techniques into neural network pipelines.
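A counterfactual search can be sketched as a simple one-feature scan: nudge a single input in fixed steps until the decision flips. The scoring rule, threshold, and feature names below are hypothetical stand-ins for a trained model, and real counterfactual methods typically search over several features at once.

```python
def model_decision(features: dict) -> str:
    """Hypothetical rule-based signal standing in for a trained model."""
    score = 3 * features["sentiment"] - 2 * features["volatility"]
    return "buy" if score >= 1.0 else "hold"


def counterfactual(features: dict, name: str, step: float, max_steps: int = 100):
    """Return the closest input (in multiples of `step` on one feature)
    that changes the decision, or None if no flip is found."""
    original = model_decision(features)
    for k in range(1, max_steps + 1):
        candidate = dict(features)
        candidate[name] = features[name] + k * step
        if model_decision(candidate) != original:
            return candidate
    return None


current = {"sentiment": 0.5, "volatility": 0.5}
flipped = counterfactual(current, "sentiment", step=0.05)
```

Here the scan reports that raising the sentiment input from 0.5 to about 0.7, with volatility unchanged, would turn a "hold" into a "buy".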

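EUR-Lex hosts the full EU AI Act text. Article 13 requires transparency and the provision of information to deployers, and Article 14 requires human oversight. Fines reach up to 7% of global annual turnover for prohibited practices and up to 3% for most other violations.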

Open-source libraries such as SHAP and LIME let developers add explainability layers quickly.

Member States Accelerate Interpretable AI Rollout

Germany's Federal Ministry for Economic Affairs allocated €50 million to Fraunhofer institutes for interpretable AI prototypes. Portugal is piloting interpretable AI in its textile sector.

France's AI ethics boards guide Paris startups on large language models (LLMs). Italy refines migration prediction models.

National plans follow Brussels timelines. General-purpose AI obligations have applied since August 2025, and the Commission's enforcement powers over them start in August 2026. Harmonized enforcement enables GDPR-compliant data flows.

Fintech and Banks Embrace Interpretable AI

EU banks apply interpretable AI in credit scoring. Models give clear reasons for loan denials, which gives customers concrete grounds to contest a decision.
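One simple way a credit model can justify a denial is a points-based scorecard that reports the features that cost the applicant the most points. The weights, base points, threshold, and feature names in this sketch are hypothetical and are not drawn from any bank's model.

```python
# Hypothetical scorecard: points added per unit of each feature
WEIGHTS = {
    "years_employed": 5,
    "missed_payments": -20,
    "debt_to_income_pct": -1,
}
BASE_POINTS = 60
APPROVAL_THRESHOLD = 50


def score_with_reasons(applicant: dict, top_n: int = 2):
    """Return (approved, total points, adverse reasons, worst first)."""
    contributions = {k: WEIGHTS[k] * applicant[k] for k in WEIGHTS}
    total = BASE_POINTS + sum(contributions.values())
    approved = total >= APPROVAL_THRESHOLD
    # Adverse reasons are the features with the most negative contributions
    adverse = sorted(
        (k for k, v in contributions.items() if v < 0),
        key=lambda k: contributions[k],
    )[:top_n]
    return approved, total, adverse


approved, total, reasons = score_with_reasons(
    {"years_employed": 2, "missed_payments": 1, "debt_to_income_pct": 45}
)
```

For this applicant the scorecard denies the loan and names the debt-to-income ratio, then the missed payment, as the main reasons.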

Crypto platforms use the same tools to dissect volatility episodes such as the drop to 23 on the Fear & Greed Index. Transparent AI boosts forecast accuracy by 15%, per fintech studies.

The Digital Markets Act (DMA) imposes transparency obligations on gatekeeper platforms. AI Act enforcement syncs with DMA provisions.

Startups raise €200 million for explainable systems in 2026.

Global Race Shapes Interpretable AI Standards

China pushes opaque state AI. US favors speed over explainability. EU balances innovation and trust.

UK post-Brexit rules mirror EU standards. Canada and NATO adopt interpretable AI for defense.

Green Deal uses interpretable AI for energy grids, verifying carbon data transparently.

EU Cements Interpretable AI Leadership

MEPs will propose amendments to the guidelines this quarter, and the Council will approve the final text. A review in 2028 will evaluate global impact.

Strong enforcement across 27 member states positions interpretable AI as the EU's tech edge. Fintech firms that update their systems now gain a compliance advantage.