1. EU AI Act mandates interpretable AI for high-risk sectors from August 2026.

2. Crypto Fear & Greed Index at 23 as Bitcoin holds $74,515.

3. Finance, health, and crypto lead explainable AI adoption.

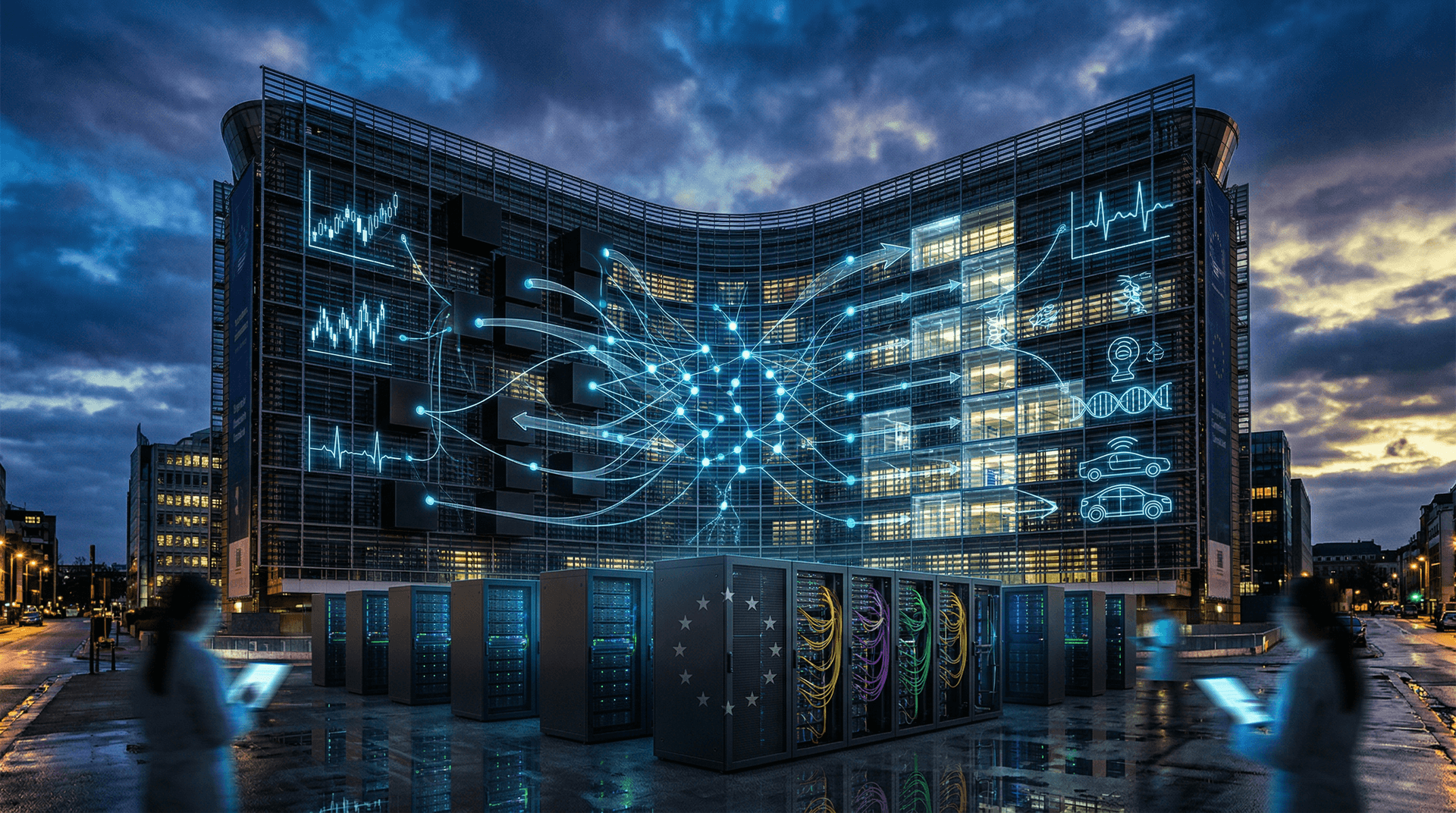

The EU AI Act requires interpretable AI in high-risk sectors from August 2, 2026. The European Parliament approved the Act on March 13, 2024, the Council followed on May 21, 2024, and it entered into force on August 1, 2024. Bitcoin trades at $74,515, per CoinGecko.

The Crypto Fear & Greed Index stands at 23 per Alternative.me, signaling extreme fear.

EU AI Act Article 13 Drives Interpretable AI Transparency

Article 13 requires providers of high-risk AI systems to make them transparent enough that deployers can interpret the output and understand the decision logic behind it. The European Commission's DG CONNECT points to the Act's further requirements for high-risk systems: event logging, training data documentation, and a risk management system.
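As an illustration of what such logging could look like in practice, here is a minimal Python sketch of a per-decision audit record. The field names, the `DecisionRecord` class, and the `credit-model-0.1` version string are hypothetical and are not taken from the AI Act or any regulator's template; the sketch only shows the general idea of storing a timestamp, a model version, a digest of the inputs, the output, and the main explanatory factors.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


def utc_now() -> str:
    """Current UTC time as an ISO 8601 string."""
    return datetime.now(timezone.utc).isoformat()


@dataclass
class DecisionRecord:
    # All field names are illustrative assumptions, not a regulatory schema.
    model_version: str
    input_digest: str   # hash of the inputs, so raw personal data is not logged
    output: float
    top_factors: dict   # e.g. feature attributions from an explainer
    logged_at: str = field(default_factory=utc_now)


def digest_inputs(inputs: dict) -> str:
    """SHA-256 over a canonical JSON form, so key order does not matter."""
    canonical = json.dumps(inputs, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def make_log_line(model_version: str, inputs: dict, output: float,
                  top_factors: dict) -> str:
    """Build one JSON line suitable for an append-only decision log."""
    record = DecisionRecord(
        model_version=model_version,
        input_digest=digest_inputs(inputs),
        output=output,
        top_factors=top_factors,
    )
    return json.dumps(asdict(record), sort_keys=True)


log_line = make_log_line(
    "credit-model-0.1",                       # hypothetical version label
    {"income": 4.0, "debt_ratio": 2.0},
    1.4,
    {"income": 2.0, "debt_ratio": -0.6},
)
print(log_line)
```

Hashing the inputs rather than storing them is one possible design choice for keeping personal data out of the log; a real deployment would need to decide what must be retained to reconstruct a decision.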

High-risk sectors include finance, healthcare, and critical infrastructure (Recital 42). The ban on prohibited AI practices applies from February 2, 2025.

Finance firms are accelerating interpretable AI adoption for credit scoring under the Capital Requirements Regulation (CRR).

ESMA Pushes Interpretable AI in Finance MiFID Compliance

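The European Securities and Markets Authority (ESMA) emphasized explainable AI in its 2020 AI/ML discussion paper. Opaque models expose firms to fines of up to 4% of global annual turnover under the GDPR. Under the AI Act, fines reach up to 3% for breaches of high-risk obligations and up to 7% for prohibited practices.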

Techniques like SHAP and LIME disclose feature importance. JPMorgan Chase research shows SHAP reduces fraud detection errors by 15% while enhancing regulatory compliance.
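To make the idea of feature attribution concrete, the sketch below computes exact Shapley values (the quantity SHAP approximates) for a toy credit-scoring model by enumerating every feature coalition. It does not use the `shap` library. The model, its weights, the baseline, and the applicant values are all hypothetical and chosen only for illustration. Exact enumeration is only practical for a handful of features.

```python
from itertools import combinations
from math import factorial

# Hypothetical linear scoring model; the weights are illustrative only.
WEIGHTS = {"income": 0.5, "debt_ratio": -0.3, "late_payments": -0.2}
# Reference point that "absent" features fall back to (an assumption).
BASELINE = {"income": 0.0, "debt_ratio": 0.0, "late_payments": 0.0}


def score(features):
    """Toy model: weighted sum of the feature values."""
    return sum(WEIGHTS[name] * value for name, value in features.items())


def shapley_values(model, instance, baseline):
    """Exact Shapley attribution of model(instance) - model(baseline)."""
    names = list(instance)
    n = len(names)
    values = {}
    for name in names:
        others = [f for f in names if f != name]
        total = 0.0
        for size in range(n):  # coalition sizes 0 .. n-1
            # Shapley weight for a coalition of this size
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for coalition in combinations(others, size):
                present = set(coalition)
                without = {f: instance[f] if f in present else baseline[f]
                           for f in names}
                with_feature = dict(without)
                with_feature[name] = instance[name]
                # Marginal contribution of `name` to this coalition
                total += weight * (model(with_feature) - model(without))
        values[name] = total
    return values


applicant = {"income": 4.0, "debt_ratio": 2.0, "late_payments": 1.0}
attributions = shapley_values(score, applicant, BASELINE)
for feature, contribution in attributions.items():
    print(f"{feature}: {contribution:+.2f}")
```

The attributions sum to the difference between the applicant's score and the baseline score, which is the property that makes this kind of explanation useful when a reviewer asks why a particular decision was reached.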

Bitcoin holds at $74,515, up 0.5% on October 10, 2024 (CoinGecko). Ethereum trades at $2,332.60, up 0.3%. XRP rises 4.3% to $1.41 (CoinGecko).

Crypto volatility heightens interpretable AI demand. Models analyze on-chain data for sentiment and risk forecasting.

Crypto DeFi Integrates Interpretable AI Amid Fear at 23

The Fear & Greed Index reading of 23 warns of panic selling (Alternative.me). Interpretable AI can explain sentiment drivers such as whale transactions and help forecast downturns.

DeFi platforms adopt explainable AI (XAI) for risk assessment. BNB trades at $621.99, up 0.7%. USDT holds at $1.00 (CoinGecko).

MiCA Regulation Article 65 requires transparent risk controls for crypto-asset services. Europe's regulatory sandboxes, overseen by national authorities like Germany's BaFin, test compliant prototypes.

Interpretable AI helps firms align MiCA compliance with the AI Act, per European Banking Authority (EBA) guidelines.

Healthcare AI Adopts Interpretability for EMA Approval

Healthcare systems interpret medical scans with explainable rationales (AI Act Article 52). The European Medicines Agency (EMA) integrates AI Act rules for software as a medical device.

Clinicians demand traceable decisions. EMA's 2024 AI guidance stresses bias documentation.

Autonomous vehicles log sensor decisions under Regulation (EU) 2019/2144 type-approval.

Critical infrastructure providers document biases (Article 10).

Horizon Europe Funds €1.7 Billion in Interpretable AI

The Horizon Europe program allocates €1.7 billion to trustworthy AI through 2027 (European Commission). Brussels research labs combine neural networks with decision trees to build hybrid models.

Startups prioritize interpretability. Venture capital investment reached €500 million in 2024 (Dealroom.co).

The ECB's 2023 Financial Stability Review recommends explainable models for stress tests and macroprudential supervision.

EU Interpretable AI Push Yields €13 Trillion Global Gains

The GDPR's extraterritorial reach is the model for the AI Act's effect on US firms such as Google and Meta.

Interpretable AI builds enterprise trust. PwC projects €13 trillion economic impact by 2030.

Enforcement of the high-risk obligations starts on August 2, 2026. European Commission guidelines and implementing acts will refine the rules. Finance and crypto are pioneering scalable interpretable AI solutions.